Bot or Not? – Are you suffering from ‘bot clicks’?

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

I didn't even get to that part.

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

We have seen a similar situation to this thread creeping in on our metrics.

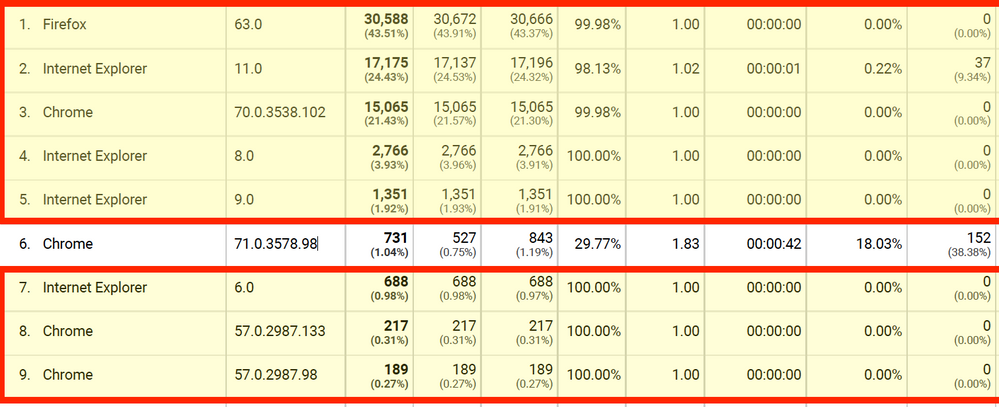

Recently, I decided to break down our email traffic by Browser and Browser version, in accordance with some helpful tips from this article.

Specifically, this part:

The most common fingerprint of a bot visit (within Google Analytics) is very low quality traffic – indicated by 100% New Sessions, 100% Bounce Rate, 1.00 Pages/Session, 00.00.00 Avg Session Duration, or all of the above.

The Criteria:

I looked at traffic using the criteria above (a slight deviation between sessions and new sessions is OK) and also checked whether it hit a goal completion. The traffic is from Q1'19.
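As a rough illustration of the criteria above, here is a minimal Python sketch that flags a report row as bot-like. The `SegmentRow` fields, the `looks_like_bot` name, and the 2% tolerance are assumptions made for the example, not anything exported by Google Analytics, and the sketch requires all of the signals at once, which is stricter than the quoted article's "or all of the above".

```python
from dataclasses import dataclass


@dataclass
class SegmentRow:
    """One row of a report broken down by browser and browser version (hypothetical shape)."""
    browser: str
    sessions: int
    new_sessions: int
    bounce_rate: float          # fraction between 0.0 and 1.0
    pages_per_session: float
    avg_session_seconds: float
    goal_completions: int


def looks_like_bot(row: SegmentRow, new_session_tolerance: float = 0.02) -> bool:
    """Return True when a row matches the low-quality fingerprint.

    The tolerance allows the slight deviation between sessions and
    new sessions; 0.02 is an arbitrary value chosen for illustration.
    """
    if row.sessions == 0:
        return False
    new_share = row.new_sessions / row.sessions
    return (
        new_share >= 1.0 - new_session_tolerance
        and row.bounce_rate >= 1.0
        and row.pages_per_session <= 1.0
        and row.avg_session_seconds == 0
        and row.goal_completions == 0
    )


# Made-up rows, not real report data.
suspect = SegmentRow("ExampleBrowser 1.0", 1000, 990, 1.0, 1.0, 0.0, 0)
engaged = SegmentRow("ExampleBrowser 2.0", 200, 120, 0.45, 2.3, 95.0, 4)
print(looks_like_bot(suspect), looks_like_bot(engaged))
```

Requiring every signal keeps false positives down, at the cost of missing scanners that linger on the page or load a second URL.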

The Findings:

Of the 70K users/sessions, about 2K of them appear to have actual activity attached to them.

This would indicate that only about 2.86% of my email clicks came from real visitors. Put another way, roughly 97% of our email traffic was likely from these email scanners.
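For anyone checking the arithmetic, a short Python sketch using the rounded figures from the findings (about 70K sessions, about 2K with real activity). The function name is just for illustration, and the result is only as precise as those rounded inputs.

```python
def traffic_shares(active_sessions: int, total_sessions: int) -> tuple:
    """Return (human_share, scanner_share) as fractions of total sessions."""
    human_share = active_sessions / total_sessions
    return human_share, 1 - human_share


# Rounded figures from the post: ~2K active sessions out of ~70K.
human, scanner = traffic_shares(2_000, 70_000)
print(f"{human:.2%} human, {scanner:.2%} scanner")  # prints "2.86% human, 97.14% scanner"
```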

Image Snippet from GA report:

Conclusion:

We're still tackling exactly how to approach reporting of true email traffic, but one thing is for sure: all is not what it seems!

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

That's quite helpful. We have estimated that 70-90% of our clicks are spam, which is hugely disappointing of course.

Sanford Whiteman - is it possible to take the Click Detail from the logs and put it into a table like the above? I've been somewhat suspicious that the Device and Browser Type would be a clue to a click scanner. I bet this is an API pull though.

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

Has anyone seen the numbers for Opens getting skewed as well? Since that number is tracked via an image/pixel, I'm surprised to not hear about skewing of that number.

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

Good question. But remember that the mail scanners are interested in security (not privacy) risks. A 1x1 image wouldn't be a security concern (unlike, for example, a giant 800x600 image with spamvertising text on it, which would be worth OCRing). So to the degree that a scanner can predict the final visual layout, the pixel wouldn't be worth fetching.

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

Yep, we haven't seen that behavior.

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

Hi Sanford Whiteman & All -

I just posted this question but realized I did so to an old thread. Meant to post it here.

I'm wrestling with this issue, too, for my clients. It makes it really hard to evaluate the impact of emails unless they lead to a form. My latest idea is to only change someone's program status to clicked if they have clicked at least twice (or 3 times) on any of the links. E.g.:

Filter 1

Clicks link in email, email is A, link is A

Min number of times = 2

Filter 2

Clicks link in email, email is A, link is B

Min number of times = 2

etc.... a filter for each link (and filter logic is "any"). A bit of a nuisance to build when emails have multiple links. But what do you think? Wouldn't this tactic have a better chance of weeding out link scanners? I'm assuming, of course, that the first click is the link scanner but if there's a 2nd click it's a person.
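To make the logic of those filters concrete, here is a minimal Python sketch of the same rule applied to exported click data rather than built inside Marketo. The `Click` record and the `people_with_repeat_clicks` function are hypothetical names for the example. A person qualifies only if a single link in the email was clicked at least `min_clicks` times, which mirrors one filter per link combined with "any" logic.

```python
from collections import Counter
from typing import Iterable, NamedTuple, Set


class Click(NamedTuple):
    """One click activity (hypothetical export format)."""
    person_id: str
    email: str
    link: str


def people_with_repeat_clicks(
    clicks: Iterable[Click], email: str, min_clicks: int = 2
) -> Set[str]:
    """Return people who clicked any one link in `email` at least `min_clicks` times."""
    # Count clicks per (person, link) pair for the email in question.
    counts = Counter((c.person_id, c.link) for c in clicks if c.email == email)
    return {person for (person, _link), n in counts.items() if n >= min_clicks}


# Made-up activity data.
sample = [
    Click("p1", "A", "link-a"),
    Click("p1", "A", "link-a"),   # second click on the same link
    Click("p2", "A", "link-a"),
    Click("p2", "A", "link-b"),   # two clicks, but on different links
    Click("p3", "B", "link-a"),
    Click("p3", "B", "link-a"),   # different email
]
print(people_with_repeat_clicks(sample, "A"))  # prints {'p1'}
```

Note that a scanner which fetches every link once still produces only one click per link, so it would not pass this rule, but neither would a real person who clicks once.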

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

Hey Denise,

I get what you're going for here with this solution, and it's certainly an option to consider, but now you're docking those first-time REAL clicks. Instead of weeding out only bot clicks, you're also weeding out actual, real-life engagement.

For example, we've used some raw data from the database along with time stamps on the activity logs to try to identify frequent known-bot-click-domains (we're entirely B2B), and try to weed those out of reporting. We don't NOT send to those people, and we count the bot clicks overall, but we don't adjust lead score using those activities for contacts on those domains. We know we're filtering out some real activity, but we have decided that being confident in our leads being passed to sales is more important than having 100% accurate engagement numbers (so long as we're still driving value for the org as a whole).
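A minimal sketch of that idea in Python, assuming the list of suspect domains has already been worked out from the activity logs. The domain, the point value, and the function names below are all placeholders for illustration. Clicks from listed domains are still counted elsewhere, they just contribute nothing to the lead score.

```python
# Placeholder list; in practice this would come from your own log analysis.
KNOWN_SCANNER_DOMAINS = frozenset({"scanner-heavy.example"})


def email_domain(address: str) -> str:
    """Return the lowercased domain part of an email address."""
    return address.rsplit("@", 1)[-1].lower()


def score_delta_for_click(
    address: str,
    click_points: int = 5,                      # arbitrary example value
    scanner_domains: frozenset = KNOWN_SCANNER_DOMAINS,
) -> int:
    """Return the lead-score change for one click; 0 for known scanner domains."""
    if email_domain(address) in scanner_domains:
        return 0
    return click_points


print(score_delta_for_click("pat@scanner-heavy.example"))  # prints 0
print(score_delta_for_click("sam@other.example"))          # prints 5
```

As the post says, this trades away some real activity on those domains in exchange for more confidence in the scores passed to sales.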

Definitely not an easy problem to solve and I don't expect it to ever go away. Best of luck!

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

Hi Chris,

Yes, you're right that I'd be docking (or just not crediting) the first-time real clicks. I was thinking of that as an interim solution until I've devised a good method of identifying the known-bot-click-domains in our database. Since we mostly target large companies, I have the impression, just from looking through "clicked" lists, that most of them employ link scanners, so I think I'm okay with erring on the side of under-reporting.

How do you handle clicks in terms of email program success status? That is, does "clicked" count as success?

Thank you for your input!

Best,

Denise

Re: Bot or Not? – Are you suffering from ‘bot clicks’?

Hey Denise,

Depending on the program and audience (we see large pockets of auto-clicks with specific audience segments), we may use the person's web visit as the benchmark for program success. Many in this thread have shared that they see bots triggering web page views as well, but I've done a ton of testing with our audience and Marketo data, and I do not experience that within our instance at this time.

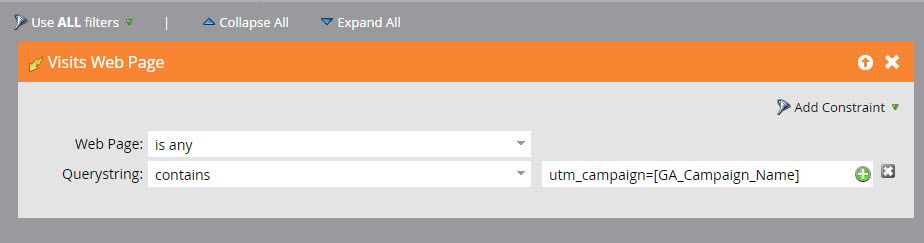

The way I do this on a program-by-program basis is by using a Visits Web Page trigger program using the querystring of page visits to the website. Since we tag all of our emails with Google Analytics tracking params, we basically just put that program's campaign ID into the querystring constraint, then any page visited to the site with that in the querystring triggers the program to set success. This way if you have 3 links in your email to different pages, the trigger is just looking for anyone landing on the site with your GA tags in place for that program. I'm also playing around with tokenizing by appending program.id to all links, then dynamically searching for that in my trigger but haven't built that out fully as of yet.
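Outside of Marketo, the querystring check described above boils down to something like the following Python sketch. It assumes the campaign ID travels in the standard `utm_campaign` parameter; the function name, example URLs, and campaign ID are made up, and this is not how the Visits Web Page trigger is implemented internally.

```python
from urllib.parse import parse_qs, urlparse


def visit_matches_program(
    page_url: str, campaign_id: str, param: str = "utm_campaign"
) -> bool:
    """Return True if the visited URL carries the program's campaign ID.

    The page path is ignored, so any landing page tagged for the
    program counts, regardless of which email link was clicked.
    """
    query = parse_qs(urlparse(page_url).query)
    return campaign_id in query.get(param, [])


# Hypothetical URLs and campaign ID.
print(visit_matches_program(
    "https://www.example.com/pricing?utm_source=email&utm_campaign=q1-webinar",
    "q1-webinar",
))  # prints True
print(visit_matches_program("https://www.example.com/pricing", "q1-webinar"))  # prints False
```

Matching the parsed parameter value exactly, rather than searching the raw querystring for a substring, avoids one campaign ID accidentally matching a longer one that contains it.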

A couple of things to note: 1) You will see fewer page visits than clicks. Every time. That's normal: it's the natural drop-off from users bouncing quickly, combined with the bot click problem. 2) Your experience with bots also triggering this page view may differ from mine. I've done lots of testing, and as far as I can tell it's fairly clean for the small group in our database that I use this approach with.

Good luck!
